Researchers at The Ohio State University (OSU), Columbus, are perfecting a security algorithm that watches and analyzes the way people move on campus. Developed by James W. Davis, Professor of Computer Science and Engineering at the university, the system combines video cameras with machine learning methods, enabling the computer to perform the kind of visual recognition that seems effortless for humans. It "remembers" typical traffic patterns and spots anomalies. It is being designed to flag a stumbling or wandering person who may look questionable to security personnel: Are they having a stroke? Are they drunk? Are they lost?

First implemented in the fall of 2009, the OSU smart system employs standard security cameras to scan views of thousands of people and identify potential problems. Since most people do the same thing at the same time—picture a scenario of people leaving work at 5 p.m., moving purposefully throughout a parking garage toward their vehicles—the computer scans for unusual behavior.

"The system uses activity analysis and behavior analysis," explained Davis. "If I am interested in a particular individual or aggregation, I can push that individual into the program and get a value of how typical their activity pattern is." The longer the system runs, the more astute it becomes. The system does not define a person's problem—only that they are acting abnormally. "We care what you do, not who you are," Davis continued.

Not a replacement

Despite the system's many benefits, Davis confirmed that it is meant to serve as an aid for the security personnel who survey the campus for potentially suspicious or harmful activity.

"We are not trying to replace the human at the desk," said Davis. "But for a security guard trying to follow 100 cameras, this can be used to detect the onset of abnormal behavior and allow security to intervene."

The project has been funded by the National Science Foundation (NSF), Arlington, Va., and the Air Force Research Laboratory (AFRL), headquartered at the Wright-Patterson Air Force Base, Ohio.

And while Davis confirmed they have good algorithms for modeling normal behavior in a specific area, they are working to include algorithms for reliably detecting abnormal behavior.
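To make the idea of a typicality value concrete, here is a minimal sketch of one way a model of normal behavior in a specific area could score a track. It is not the OSU algorithm, whose details the article does not give. The ground grid, the `cell_size` parameter, and the function names `build_pathway_model` and `typicality` are all assumptions made for illustration.

```python
from collections import Counter

def build_pathway_model(tracks, cell_size=5.0):
    """Count how often each ground-grid cell is visited across observed tracks.

    Assumption: a track is a list of (x, y) ground positions in metres.
    """
    counts = Counter()
    for track in tracks:
        for x, y in track:
            counts[(int(x // cell_size), int(y // cell_size))] += 1
    return counts

def typicality(track, counts, cell_size=5.0):
    """Return a value in [0, 1]: 1.0 means the track stays on the busiest cells,
    0.0 means it only crosses cells nobody has been seen in before."""
    if not track or not counts:
        return 0.0
    peak = max(counts.values())
    total = 0.0
    for x, y in track:
        # A Counter returns 0 for cells that were never visited.
        total += counts[(int(x // cell_size), int(y // cell_size))] / peak
    return total / len(track)

# Hypothetical usage: many people walking the same route, then two new tracks.
observed = [[(1.0, 1.0), (6.0, 1.0), (11.0, 1.0)] for _ in range(10)]
model = build_pathway_model(observed)
on_path = typicality([(2.0, 2.0), (7.0, 2.0)], model)
off_path = typicality([(50.0, 50.0), (60.0, 60.0)], model)
```

Because the counts keep accumulating as more tracks are observed, a model of this kind sharpens the longer it runs, which matches the behavior Davis describes. A low score says only that a track is unusual, not why.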

"We have models of common pathways," said Davis. Once they work out individual abnormal behavior patterns, they will move to analyzing group behavior—the interaction of individuals or groups of people—which is a big challenge.

"We are trying to fit groups versus non-groups into the algorithm," explained Davis. Then will come tracking in a dense crowd. "That's not yet ready for prime time," he said, noting the difficulty of tracking a person who goes behind a truck to reappear elsewhere, or the problem the program has when it sees a person from the front at one moment and from behind the next.

The big picture

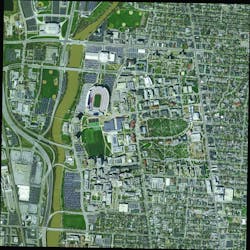

The application gives security officers the view (similar to Google Earth) and control that most central security stations only dream of. If the operator wants to access a particular local view in the scene, there is no delay. "Security simply has to click on the position in the global view (near a door or building) and the system will find the nearest camera," said Davis. Where multiple cameras view an area, the system offers views from all of them. One camera can be aimed at a suspect running away while a second camera is likely to get a full-frontal view of the suspect and possibly even a face shot. "For this we have to know the height of the building (to the camera mounting station) as well as its latitude and longitude," said Davis. The team built a 3-D line from the camera to the spot of the incident.
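The click-to-camera step can be sketched as follows, under stated assumptions. The article says only that the system needs each camera's latitude, longitude, and mounting height, and that a 3-D line runs from the camera to the clicked spot. The `Camera` class, the flat-ground equirectangular approximation, and the pan and tilt conventions below are illustrative choices, not the researchers' implementation.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres

@dataclass
class Camera:
    name: str
    lat: float       # degrees
    lon: float       # degrees
    height_m: float  # mounting height above the ground (assumed flat)

def line_of_sight(cam, lat, lon):
    """Return (3-D distance in m, pan in degrees clockwise from north,
    tilt in degrees below horizontal) from the camera to a ground point."""
    # Equirectangular approximation: adequate over campus-scale distances.
    north = math.radians(lat - cam.lat) * EARTH_RADIUS_M
    east = (math.radians(lon - cam.lon) * EARTH_RADIUS_M
            * math.cos(math.radians(cam.lat)))
    ground = math.hypot(east, north)
    distance = math.hypot(ground, cam.height_m)
    pan = math.degrees(math.atan2(east, north)) % 360.0
    tilt = math.degrees(math.atan2(cam.height_m, ground))
    return distance, pan, tilt

def rank_cameras(cameras, lat, lon):
    """Order cameras by 3-D distance to the clicked point, nearest first."""
    return sorted(cameras, key=lambda c: line_of_sight(c, lat, lon)[0])
```

With two hypothetical cameras at the same latitude and longitude, one mounted at 10 m and one at 30 m, a ground-only distance cannot tell them apart, while the 3-D distance ranks the lower camera as nearer and gives each a different tilt angle.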

"Originally, we used an X-Y grid on the ground," Davis continued. "But we found that more often than not, multiple cameras can view a location, and there is a difference between a camera view from an eight-story building versus a three-story building." Once a specific local view is displayed, operators can click anywhere within that view to quickly and automatically reposition the camera to that spot. In addition to the global and local views shown, a seamless 360-degree, static high-resolution panoramic image for each camera is provided to show the viewspace possible from each camera.

While the initial system is analog, they digitize all of the analog video and put it on the university's IP server. A DVR records images from every camera so security can pull DVRs for review. All video moves via USB connections.

Standard Pelco Spectra III Series dome cameras and Spectra IV PTZ units serve the test network. They are among the hundreds of cameras currently serving the campus. The university is interested in incorporating its research cameras into the main security network, whose stations are served by a mix of Pelco and Bosch cameras. The system will work with Pelco and Bosch cameras as well as products from Sony. The software is Windows XP-based and runs on Dell computers. Another design challenge the university faced was the choice between a hardwired and a wireless interface. Davis chose hardwired, mainly because of possible interference.

The university does not yet plan to take the system beyond the OSU campus but would consider outside offers.