Tracking Protective Services Key Performance Indicators

Objective: Protective services operations are the first line of defense. When these operations are outsourced, the easy out for security managers is to have the vendor self-report their compliance with the service level agreement (SLA) and merely provide periodic counts of expended time, dollars and reported activities. This fails to provide essential information about what this time and money has yielded in risk management results. It is essential to establish a variety of key performance indicators to evaluate and qualitatively measure these critical security services.

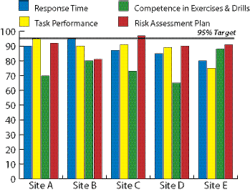

Risk Management Strategy: You can draw on more than 100 performance measures for protective services operations, but you need to select the key few that will tell your story or convey your message. In the example, instead of tracking simplistic training or invoicing measures that are useful for contract administration, we are homing in on four more qualitative measures that we deem critical in the vendor SLA for measuring the competence of a key element of the security program. Remember, the SLA process relies heavily on the vendor self-assessing, so several independently measured performance metrics are essential to management oversight.

A note about targeting: For measuring quality, speed and service performance, you should establish reach-oriented targets for both your proprietary and contracted services. In this example, we have established a 95 percent reliability target for the guard force vendor’s quarterly performance fee calculation. You can elect to apply any target, but some analysis of reasonableness is appropriate. For example, we should expect 99.9-percent reliability from our alarm system, but the same standard for across-the-board response time fails to take into account weather, traffic and other delay factors.

Response time: In the example, I am totally comfortable with defending a 95 percent target for response time for employee safety and critical area (high priority/high value) alarms. The meaningful metric for me is a 5-minute response time threshold from dispatch to arrival at the site of the call for service.
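As a rough illustration of how this metric could be checked independently of the vendor's own report, the sketch below computes the share of calls that met the 5-minute dispatch-to-arrival threshold and compares it with the 95 percent target. The function name, the record layout and the sample timestamps are hypothetical; a real dispatch log would need its own parsing and its own rules for which alarm categories count.

```python
from datetime import datetime, timedelta

THRESHOLD = timedelta(minutes=5)  # dispatch-to-arrival threshold from the text
TARGET = 0.95                     # 95 percent target from the text


def response_compliance(calls):
    """Return the fraction of calls whose dispatch-to-arrival time met THRESHOLD.

    `calls` is a list of (dispatched, arrived) datetime pairs. This layout is
    an assumption made for illustration, not a standard log format.
    """
    if not calls:
        raise ValueError("no calls to evaluate")
    on_time = sum(1 for dispatched, arrived in calls
                  if arrived - dispatched <= THRESHOLD)
    return on_time / len(calls)


# Hypothetical sample of priority calls for one reporting period.
calls = [
    (datetime(2024, 1, 5, 9, 0, 0), datetime(2024, 1, 5, 9, 3, 30)),
    (datetime(2024, 1, 5, 14, 10, 0), datetime(2024, 1, 5, 14, 15, 0)),
    (datetime(2024, 1, 6, 22, 45, 0), datetime(2024, 1, 6, 22, 52, 10)),
    (datetime(2024, 1, 7, 3, 20, 0), datetime(2024, 1, 7, 3, 24, 0)),
]

rate = response_compliance(calls)
meets_target = rate >= TARGET
print(f"{rate:.1%} of calls on time; target met: {meets_target}")
```

Whether a response of exactly five minutes counts as on time is a design choice; this sketch counts it as meeting the threshold, and the SLA should state the rule explicitly.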

Task performance: This is admittedly a big bucket of expectations for a not-to-exceed 5 percent defect rate, but you would establish a short list of task categories that would be incorporated into the metric. An example would be tested ability of trained first responders to successfully attend to incidents in their areas of expertise.
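One way the defect-rate measure might be rolled up across a short list of task categories is sketched below. The category names and counts are invented for illustration, and pooling all categories into one rate is an assumption; an SLA could just as reasonably hold each category to the 5 percent limit on its own.

```python
MAX_DEFECT_RATE = 0.05  # not-to-exceed 5 percent defect rate from the text


def defect_rate(results):
    """Return the pooled defect rate across all task categories.

    `results` maps a category name to (defects, tasks_evaluated). Both the
    mapping layout and the category names below are hypothetical.
    """
    total_defects = sum(defects for defects, _ in results.values())
    total_tasks = sum(evaluated for _, evaluated in results.values())
    if total_tasks == 0:
        raise ValueError("no evaluated tasks")
    return total_defects / total_tasks


# Hypothetical quarterly results from independently observed task checks.
quarter = {
    "first_aid_response": (1, 40),
    "access_control_checks": (3, 120),
    "incident_reporting": (2, 40),
}

overall = defect_rate(quarter)
within_limit = overall <= MAX_DEFECT_RATE
print(f"Pooled defect rate: {overall:.1%}; within limit: {within_limit}")
```

A pooled rate can hide a weak category behind a high-volume strong one, so it is worth reviewing the per-category figures alongside the headline number.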

Competence in exercises and drills: A key indicator of likely success in risk event response may be found in the competence of protective service operations in planned (and unplanned) security exercises and drills. An effectively planned exercise can identify, under controlled conditions, a wide range of flaws in plans and procedures, gaps in training and unmet supervisory needs. Since we often rely on vendor competencies when real risk events unfold, the results of a well-planned and documented exercise can deliver a rich inventory of qualitative vs. quantitative performance measures.

Risk assessment plan: A quality-focused SLA will hold the local vendor accountable for either developing and managing a comprehensive risk assessment plan or knowledgeably executing one provided by security management. Knowledge of risk and the countermeasures essential to mitigation is a fundamental expectation of any entity charged with enterprise protection. This process can be measured, and the resulting metrics can be maintained by management for service agreement evaluation.

There is a flow-down effect from establishing the kinds of standards and expectations discussed here. If our vendor expects to succeed, they must have the demonstrated ability to provide meaningful, actionable performance metrics that result in an improved state of protection.

If we in security management expect to succeed, we need to engage in measurable vendor oversight rather than merely paying the bill after checking the box that the monthly count report has arrived on time.

George Campbell is emeritus faculty of the Security Executive Council (SEC) and former CSO of Fidelity Investments. His book, “Measures and Metrics in Corporate Security,” may be purchased through the SEC Web site. The SEC works with a faculty of more than 100 security executives and provides strategy, insight and proven practices to reduce risk and add to corporate profitability. To learn more, mail [email protected] or visit www.securityexecutivecouncil.com/?sc=std. The information in this article is copyrighted by the SEC and reprinted with permission. All rights reserved.
