Eye on Video: Pixel-based intelligent video

There are a number of intelligent video (IV) applications available today to assist security personnel in analyzing surveillance video. These video analytics extract and process information from a video frame in different ways and apply varying decision-making rules for subsequent action. Before deciding which IV algorithm is best suited for a particular installation, it is important to understand the technical capabilities of each. Basically, video analytics fall into three broad categories:

- Pixel-based intelligent video applications analyze the individual pixels in the surveillance images and are typically used for video motion detection and camera tampering applications.

- Object-based intelligent video applications recognize and categorize objects in an image and are commonly used for classifying and tracking objects in the camera's field of view.

- Specialized intelligent video applications combine pixel- and object-based intelligence to process video for specific applications such as number/license plate recognition, facial recognition, and fire and smoke detection.

This month's article focuses on pixel-based intelligent video applications. Upcoming articles will discuss the remaining two categories.

Fundamentals of intelligent video

At a basic level, intelligent video analysis software analyzes every pixel in every frame of video, characterizing those pixels and then making decisions based on those characteristics. In pixel-based motion detection, for instance, the system triggers an alert when a specific number of pixels change in size, color or brightness.

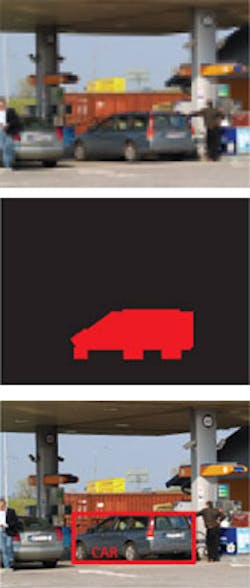

Blob recognition takes pixel change detection to the next level of intelligence. A blob is essentially a collection of contiguous pixels that share particular characteristics, with boundaries that delineate it from other parts of a video frame. A blob can be identified and characterized as a particular object, such as a person or a car, by analyzing its shape, size, speed or other parameters. Object recognition applications employ the most sophisticated algorithms to classify and track the movement of specific objects (or individuals) within a video frame.
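To make the idea of a blob concrete, here is a minimal sketch that groups contiguous changed pixels into blobs and reports each blob's size and bounding box. It assumes the frame has already been reduced to a binary mask (1 for a changed pixel, 0 otherwise) and that "contiguous" means touching horizontally or vertically. The function name `find_blobs` and the sample mask are illustrative, not taken from any particular product.

```python
from collections import deque

def find_blobs(mask):
    """Group 4-connected foreground pixels (truthy cells) into blobs.

    Returns a list of dicts holding the pixel count and bounding box
    (top, left, bottom, right) of each blob, in scan order.
    """
    height, width = len(mask), len(mask[0])
    seen = [[False] * width for _ in range(height)]
    blobs = []
    for y in range(height):
        for x in range(width):
            if not mask[y][x] or seen[y][x]:
                continue
            # Breadth-first flood fill from this unvisited foreground pixel.
            queue = deque([(y, x)])
            seen[y][x] = True
            area = 0
            top, left, bottom, right = y, x, y, x
            while queue:
                cy, cx = queue.popleft()
                area += 1
                top, bottom = min(top, cy), max(bottom, cy)
                left, right = min(left, cx), max(right, cx)
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    inside = 0 <= ny < height and 0 <= nx < width
                    if inside and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            blobs.append({"area": area, "box": (top, left, bottom, right)})
    return blobs

# Hypothetical 4x5 change mask: one 2x2 object and one isolated pixel.
mask = [
    [0, 1, 1, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 0, 0, 0, 1],
    [0, 0, 0, 0, 0],
]
blobs = find_blobs(mask)
```

A classifier could then use each blob's area and the proportions of its bounding box as simple inputs for deciding whether it looks more like a person, a car, or noise.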

There are a number of factors to weigh in deciding whether to centralize this video intelligence at a server or distribute the intelligence processing to the surveillance system endpoints, such as the network cameras and video encoders. Each has its own pros and cons. (For more information on intelligent video architecture, see the previous article in this series: "Intelligent Video Architecture: Deciding whether to centralize or distribute your surveillance analytics.")

Motion detection

Video motion detection is the most prevalent pixel-based IV application in video surveillance because it reduces the amount of video stored by flagging video that contains changes and ignoring unchanged frames. This selectivity gives security personnel the option to store key video for longer periods on a given storage capacity. The technique is also used to flag events, such as someone entering a restricted area, and send alerts to operators for immediate action.

How it works: In video motion detection applications, software algorithms continually compare images from a video stream to detect changes in an image. Early motion detection applications simply detected pixel changes from one frame of video to the next. While this schema certainly reduced video storage requirements, it was not very useful for real-time applications because it generated too many false alarms. Minor light changes, slight camera motion or even a tree swaying would raise an alarm.
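The early frame-to-frame approach described above can be sketched in a few lines. This is a minimal illustration, assuming grayscale frames stored as nested lists of 0 to 255 brightness values. The function names and threshold values are hypothetical choices, not settings from any real camera.

```python
def count_changed_pixels(previous, current, pixel_threshold=25):
    """Count pixels whose brightness differs by more than pixel_threshold."""
    return sum(
        1
        for prev_row, cur_row in zip(previous, current)
        for p, c in zip(prev_row, cur_row)
        if abs(c - p) > pixel_threshold
    )

def motion_detected(previous, current, pixel_threshold=25, min_changed=3):
    """Raise an alert when enough pixels changed between two frames."""
    return count_changed_pixels(previous, current, pixel_threshold) >= min_changed

# Hypothetical 4x6 scene with uniform brightness.
frame_a = [[100] * 6 for _ in range(4)]

# A bright 2x2 object appears in the next frame.
frame_b = [row[:] for row in frame_a]
for y in (1, 2):
    for x in (2, 3):
        frame_b[y][x] = 200

# A slight global brightness shift, such as a passing cloud.
flicker = [[value + 5 for value in row] for row in frame_a]

object_alert = motion_detected(frame_a, frame_b)
flicker_alert = motion_detected(frame_a, flicker)
oversensitive_alert = motion_detected(frame_a, flicker, pixel_threshold=3)
```

With the per-pixel threshold set too low, the harmless brightness shift changes every pixel and triggers an alert, which is the false-alarm behavior that made the earliest systems impractical for real-time use.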

Today's more advanced detection systems have the intelligence to exclude pixel-based changes from known sources such as naturally changing light conditions based on the time of day, or other known and unthreatening repetitive changes in the camera's field of view. With the exclusion of these regularly occurring phenomena, the number of false alarms has dropped dramatically. These advanced systems also have the intelligence to group pixels together to constitute a larger object, such as a person or car, which further decreases the number of errors in motion detection.
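One common way to ignore gradual lighting changes is to compare each frame against a slowly updated background model rather than against the previous frame. The sketch below uses an exponential moving average for that model. This is an assumed technique chosen for illustration; the article does not say which method any given product uses, and the names `update_background`, `foreground_mask`, `alpha` and `threshold` are hypothetical.

```python
def update_background(background, frame, alpha=0.1):
    """Blend the new frame into the background (exponential moving average)."""
    return [
        [(1 - alpha) * b + alpha * f for b, f in zip(bg_row, fr_row)]
        for bg_row, fr_row in zip(background, frame)
    ]

def foreground_mask(background, frame, threshold=30):
    """Mark pixels that differ from the background by more than threshold."""
    return [
        [abs(f - b) > threshold for b, f in zip(bg_row, fr_row)]
        for bg_row, fr_row in zip(background, frame)
    ]

# Simulated 3x4 scene whose brightness rises 2 levels per frame (a sunrise).
original = [[100.0] * 4 for _ in range(3)]
background = original
alarms = 0
for step in range(1, 51):
    frame = [[100 + 2 * step] * 4 for _ in range(3)]
    mask = foreground_mask(background, frame)
    alarms += any(any(row) for row in mask)  # True counts as 1
    background = update_background(background, frame)

# After the drift, a dark object enters at one pixel.
intruder = [[202] * 4 for _ in range(3)]
intruder[1][2] = 20
intruder_mask = foreground_mask(background, intruder)

# For contrast: the same frame compared against the never-updated original.
static_mask = foreground_mask(original, intruder)
```

Because the model follows the slow drift, the 100-level brightness rise never raises an alarm, while the sudden dark object still stands out. A fixed reference image would flag every pixel instead. The choice of `alpha` is a trade-off: a larger value adapts faster but also absorbs slow-moving objects sooner.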

Balancing parameters

Detecting relevant motion in a scene is a matter of finding the right balance between parameters. You can set the threshold for how large an object needs to be for the system to trigger an alert. You can decide how long an object needs to be moving in an image before it triggers the system. You can even decide how much an image can change before the system reacts. Advanced network cameras often allow you to place a number of different windows for motion detection within a viewing field. You can then define each window separately according to different parameters. This is particularly valuable when you know the viewing field regularly includes both stationary and mobile objects.
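The interplay of these parameters can be sketched as a small per-window configuration. This is a hypothetical model, not any camera's actual settings interface: `MotionWindow`, `min_changed` (a stand-in for object size) and `min_frames` (how long motion must persist) are names invented for the example.

```python
from dataclasses import dataclass

@dataclass
class MotionWindow:
    name: str
    top: int
    left: int
    bottom: int       # exclusive row bound
    right: int        # exclusive column bound
    min_changed: int  # changed pixels required (proxy for object size)
    min_frames: int   # consecutive active frames required before alerting
    streak: int = 0   # running count of consecutive active frames

    def update(self, mask):
        """Feed one change mask; return True when this window should alert."""
        changed = sum(
            1
            for row in mask[self.top:self.bottom]
            for cell in row[self.left:self.right]
            if cell
        )
        self.streak = self.streak + 1 if changed >= self.min_changed else 0
        return self.streak >= self.min_frames

def make_mask(cells, height=4, width=8):
    """Build a change mask with True at the given (row, column) cells."""
    mask = [[False] * width for _ in range(height)]
    for y, x in cells:
        mask[y][x] = True
    return mask

# Left half watches a door strictly; right half tolerates swaying trees.
door = MotionWindow("door", 0, 0, 4, 4, min_changed=2, min_frames=2)
trees = MotionWindow("trees", 0, 4, 4, 8, min_changed=6, min_frames=3)

# Two changed pixels near the door, three among the trees, for three frames.
busy = make_mask([(1, 1), (2, 1), (0, 5), (0, 6), (1, 6)])
door_alerts = [door.update(busy) for _ in range(3)]
tree_alerts = [trees.update(busy) for _ in range(3)]
```

In this example the door window alerts from the second frame onward, while the tree window stays silent because the movement there never reaches its higher size threshold.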

Tampering detection

Camera tampering detection is another key pixel-based IV application incorporated in surveillance systems to protect the integrity of video transmissions. Without it, a camera's view can become obstructed or deliberately altered, causing significant threats to go unnoticed and completely unusable video to be recorded and stored.

How it works: The tampering detection feature distinguishes the difference between expected changes in a camera view and unexpected changes due to tampering. It can detect whether the lens of the camera is being blocked by an errant tree branch or covered by paint, powder, moisture or a sticker. It can determine if the camera has been redirected to a view of no interest to security. It can even detect if a camera has been severely defocused or actually removed. Some camera tampering detection applications automatically learn the scene, making setup very easy.
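The kinds of checks described above can be approximated with a few simple image statistics compared against a learned reference view. The sketch below is one assumed set of heuristics, not the method used by any specific product: low overall variance suggests a covered lens, a sharp drop in edge strength suggests defocus, and a large average difference from the reference suggests the camera was redirected. All function names and threshold values are hypothetical.

```python
from statistics import pvariance

def flatten(frame):
    return [value for row in frame for value in row]

def edge_energy(frame):
    """Mean absolute difference between horizontal neighbors (crude sharpness)."""
    diffs = [abs(row[x + 1] - row[x]) for row in frame for x in range(len(row) - 1)]
    return sum(diffs) / len(diffs)

def mean_abs_diff(a, b):
    fa, fb = flatten(a), flatten(b)
    return sum(abs(x - y) for x, y in zip(fa, fb)) / len(fa)

def check_tampering(reference, frame, min_variance=50.0,
                    min_sharpness_ratio=0.5, max_scene_diff=60.0):
    """Return a label for the first heuristic that fires, else 'ok'."""
    if pvariance(flatten(frame)) < min_variance:
        return "blocked"      # nearly uniform image: lens covered or sprayed
    if edge_energy(frame) < min_sharpness_ratio * edge_energy(reference):
        return "defocused"    # edges much softer than in the reference
    if mean_abs_diff(reference, frame) > max_scene_diff:
        return "redirected"   # content no longer matches the reference
    return "ok"

# Hypothetical 4x8 reference scene with strong vertical edges.
reference = [[20, 20, 220, 220, 20, 20, 220, 220] for _ in range(4)]

normal = [[value + 5 for value in row] for row in reference]
covered = [[30] * 8 for _ in range(4)]
blurred = [[100, 110, 120, 130, 140, 130, 120, 110] for _ in range(4)]
moved = [[220, 220, 20, 20, 220, 220, 20, 20] for _ in range(4)]

results = [check_tampering(reference, f) for f in (normal, covered, blurred, moved)]
```

A real deployment would also need to update the reference over time so that expected changes, such as day turning to night, are not mistaken for tampering.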

Camera tampering detection is most prevalent in environments prone to vandalism, such as schools, prisons or public transportation venues, where someone is more likely to intentionally redirect, damage or block cameras. Detection occurs in seconds, generating an alert to correct the situation. Without this ability, it could take months before surveillance operators discovered that a camera was pointed in the wrong direction and that archived footage was useless.

Pixel-based image enhancement

Weather conditions naturally affect the quality of video images in outdoor surveillance. Fog, smoke, rain and snow make it difficult for security personnel to monitor scenes effectively and adequately identify people, objects and activity. Adjusting brightness and contrast does little to improve image clarity. With advanced intelligent video algorithms, however, you can analyze video streams and detect typical distortions caused by bad weather. The software applies real-time enhancements to the image, restoring it as much as possible to what it would have looked like without the distortion. The result is a video stream with substantially improved image quality.

Pointers for real-world deployment

To ensure that your pixel-based intelligent video application works accurately you need to address three factors: the video image quality, the efficiency of the advanced algorithms, and the processing power required.

- Video image quality. High frame rates and high resolution are not necessary. Pixel-based analysis functions quite well at five to 10 frames per second and in many applications CIF resolution (about 0.1 megapixels) is sufficient. Camera placement is critical though.

- Algorithm efficiency. The only way to assess the quality of an IV algorithm is to field test the application under realistic conditions. Run a pilot in the environment to determine how fast it is and how many correct responses and false alarms it generates. The number of acceptable errors or missed "true positives" will depend on the safety and security concerns of the user.

- Computer processing power. Because IV applications are mathematically complex, they require considerable computing power. How well they perform depends heavily on the processors used and the amount of memory available. The more processing power available, the faster they will be able to perform.
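Two of these pointers lend themselves to quick arithmetic: how many pixels per second the analysis must handle at a given resolution and frame rate, and how to summarize the results of a pilot. The sketch below assumes CIF means 352 x 288 pixels and uses a 1280 x 720, 30 frames-per-second stream purely as a comparison point. The pilot counts are invented for illustration and are not measurements from any real system.

```python
CIF = (352, 288)  # assumed CIF dimensions in pixels (width, height)

def pixels_per_second(width, height, fps):
    """Raw pixel throughput the analysis has to keep up with."""
    return width * height * fps

def pilot_summary(events):
    """Summarize a pilot log of (alarm_raised, real_incident) pairs."""
    true_pos = sum(1 for alarm, real in events if alarm and real)
    false_pos = sum(1 for alarm, real in events if alarm and not real)
    missed = sum(1 for alarm, real in events if not alarm and real)
    incidents = true_pos + missed
    alarms = true_pos + false_pos
    return {
        "detection_rate": true_pos / incidents if incidents else None,
        "false_alarm_share": false_pos / alarms if alarms else None,
        "missed": missed,
    }

cif_load = pixels_per_second(*CIF, fps=10)
hd_load = pixels_per_second(1280, 720, fps=30)
load_ratio = hd_load / cif_load

# Made-up pilot log: 18 incidents caught, 2 missed, 5 false alarms.
pilot_log = [(True, True)] * 18 + [(False, True)] * 2 + [(True, False)] * 5
summary = pilot_summary(pilot_log)
```

In this example the higher-resolution stream carries roughly 27 times as many pixels per second as CIF at 10 frames per second, which shows why modest image settings ease the processing burden. The invented pilot catches 90 percent of incidents, and whether two misses and five false alarms are acceptable depends on the site's security requirements.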

Setting realistic expectations

It is important to understand that IV applications are not infallible. But with careful adherence to some best practices, users can achieve between 90 and 95 percent accuracy. When deploying this technology, you need to set realistic expectations. Reaching 95 percent accuracy is very challenging; achieving 99 percent or beyond can be extremely difficult and costly in a real-world environment. Configuring a system is a delicate balance between not missing essential situations and reducing false triggers. Monitor and adjust your configuration over a 24-hour period to account for changes in lighting that might impact results. Understand that the more parameters you adjust in an application, the longer it will take to optimize: anywhere from a day to several weeks. The cost of the IV system will also have to be weighed against other surveillance alternatives, such as employing more security personnel. And accept that no system will ever be 100 percent accurate.

About the author: Fredrik Nilsson is general manager of Axis Communications, a provider of IP-based network video solutions that include network cameras and video encoders for remote monitoring and security surveillance. This story is part of Mr. Nilsson's "Eye on Video" series appearing in ST&D and on SecurityInfoWatch.com and IPSecurityWatch.com.