Accessed & Compromised: An Interview with a Hacker

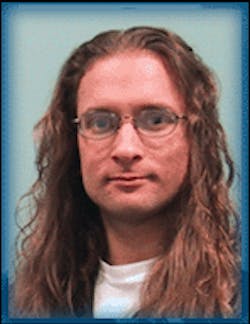

[Editor's Note: SecurityInfoWatch.com recently had the opportunity to interview Peiter "Mudge" Zatko, a former hacker turned elite computer scientist. Mudge, a division scientist at BBN Technologies in Boston, Mass., shared his thoughts on network connectivity. As our industry starts to move toward IP-based solutions, we thought you might want to know what a former hacker thinks about today's network security.]

Peiter, you've claimed before that elite hackers could take down the Internet in 30 minutes and keep it down for days. Is that scenario still a possibility?

Several of the attack scenarios that I referred to at the Senate hearings, where I stated it was possible to take down the Internet in 30 minutes, are still viable today. The technology has not changed, nor can it change easily given the dependence we have on the Internet and its current underpinnings. With that said, there has been a subtle change that would increase the amount of time some of the attacks would take to carry out. Back in the late '90s there were only a handful of major peering points. MAE East/West (Metropolitan Area Ethernet) and the NAPs (Network Access Points) were good examples of condensed peering points (a peering point being a location where service providers interconnect to hand off traffic destined for subscribers of other service providers). For attacks on the Internet routing protocols, these dense areas were (and are) very advantageous for the attacker.

Since the 1990s there has been a move towards more decentralized peering points and private exchanges. The various attacks still work, but it would more likely take around 1-2 hours and be (only) slightly more difficult to sustain for a duration of several days.

If the Internet could be crashed in a couple of hours, how long do you think it would take elite hackers to break into today's surveillance systems, which are often distributed inside companies over the same network lines they use to send email, connect servers, and reach the Internet?

There is a difference between the difficulty involved in disrupting service and in compromising the confidentiality, integrity, and authenticity of a system. The compromise actions usually require slightly more finesse and work. This is not to say that it is impossible or even difficult in many cases. However, the compromise actions can usually be automated once they have been tested and are known to work for particular scenarios.

During the Clinton sex scandal hearings, a great deal of effort was made to secure the video feed of then-President Clinton's testimony. The organizers were aware that if they used public communication lines for the video feed, people other than the intended viewers would be very interested in viewing these communications. Many video streams, even for surveillance purposes, are not encrypted or protected in any meaningful fashion. Hence it is trivial for interlopers to monitor these transmissions.

In scenarios where video is being transmitted via TCP/IP streams, the act of disrupting these streams is the same as disrupting standard network communications.

In your opinion, how prevalent is hacking into networked video?

Automated tools for making copies of networked video are freely available on the net today.

What are the motives for that kind of activity?

The motives are varied. When dealing with any particular case one must factor in not only the motives (such as curiosity, financial gain, intelligence gathering, etc.) but also the risks and opportunities associated with the action(s) of 'hacking' into a video session. This is referred to as a ROM (Risk, Opportunity, Motivation) model.

If someone is alerted that their video over IP system has been accessed, what can they do as an immediate, 30-second response to control the situation? Will that response mean temporarily having to shut down the surveillance system and disconnect it from the network?

Any action that I could advise without context would most likely be incorrect. In some situations the correct response might be to immediately disconnect or stop the transmission. In other scenarios the correct solution might be to communicate out of band from the video transmission and allow it to continue. In yet other cases, intentionally falsifying the stream to mislead the interloper might be the prudent action. No matter what, the security response taken needs to be correct for that environment. This is one of the reasons I have become very disappointed with many third-party vulnerability warnings and ranking schemes. Without knowledge of the value something has to my operations, it is remiss of people to apply seemingly arbitrary severity levels to autonomous technology threats. Worse yet, it is often misleading in that it might improperly elevate or degrade the perceived risk to a particular theater of operations.

What safeguards are on the horizon that an end user will be able to employ to deter and prevent unauthorized intrusion?

I have not been impressed with recent solutions from the industry on the defensive side. Oftentimes if an environment, or surveillance deployment, is thought through before being deployed then a large part of the risk and opportunity is mitigated at the outset. Unfortunately, too often people do not think about the environment they are deploying and simply use the most convenient and easiest solutions.

I am reminded of several wireless access point installers setting up commercial installations with the default, entirely open and insecure settings. When asked why they did not enable any of the protective mechanisms and settings that the products offered, they responded that nobody cared about it. Most likely, the recipients of these deployments did not know that their networks were being set up in entirely open/insecure modes and thus were accepting more risk than they were aware of. Similar situations have been stumbled across with wireless security video feeds inside biotech and other industries. These feeds are trivial to pick up with current Icom, or similar, radios.

The use of multi-factor authentication, such as having to provide a password and a fingerprint scan (and sometimes even present a "smart" access card), is all the buzz in the industry right now and is being used by the companies that understand the importance of security on company networks. IBM even recently added the fingerprint scan to select versions of its ThinkPad series. What are you seeing in terms of trends for this kind of authenticated access? Will multi-factor authentication for corporate networks become a standard in the near future?

There are primarily two ways to approach biometrics: authentication and identification. In the authentication approach the user states who they claim to be and then provides a biometric input. If the input is within an acceptable deviation of that user's stored "signature" in the database then it is accepted. If not, then it is rejected. With the identification approach the input is compared against the whole database of stored "signatures" in an attempt to find a match (hopefully only one) within the accepted deviation allowance. While these approaches might seem obvious, it is surprising how many vendors use the wrong approach for their intended purposes.
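To make the distinction concrete, here is a minimal Python sketch of the two approaches. It is only an illustration under stated assumptions: the enrolled users, the three-number feature vectors, the Euclidean distance measure, and the `THRESHOLD` value are all hypothetical, and real biometric systems use far richer templates and matching algorithms.

```python
import math

# Hypothetical enrolled "signatures": user id -> feature vector (made-up values).
ENROLLED = {
    "alice": [0.10, 0.80, 0.30],
    "bob": [0.90, 0.20, 0.50],
    "carol": [0.40, 0.40, 0.90],
}

# Assumed acceptable deviation between a fresh sample and a stored signature.
THRESHOLD = 0.15


def deviation(sample, signature):
    """Euclidean distance between a sample and a stored signature."""
    return math.sqrt(sum((s - t) ** 2 for s, t in zip(sample, signature)))


def authenticate(claimed_id, sample):
    """Authentication (1:1): compare only against the claimed user's signature."""
    signature = ENROLLED.get(claimed_id)
    if signature is None:
        return False  # unknown claimant is rejected outright
    return deviation(sample, signature) <= THRESHOLD


def identify(sample):
    """Identification (1:N): search every signature for matches within the threshold."""
    return sorted(
        user for user, signature in ENROLLED.items()
        if deviation(sample, signature) <= THRESHOLD
    )


if __name__ == "__main__":
    reading = [0.12, 0.78, 0.33]  # a sample close to alice's signature
    print(authenticate("alice", reading))
    print(authenticate("bob", reading))
    print(identify(reading))
```

Note that `identify` returns a list rather than a single name: with a loose threshold or a large database, more than one signature can fall within the allowed deviation, which is the "hopefully only one" caveat above.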

I feel there is promise in this area but a fair amount of progress still needs to be made. When dealing with biometric devices I am more comfortable when there is an actual unique challenge/response mechanism in place and when the underlying database of information and the communications channels in use have been vetted and are well understood. After all, how many times can you change your password if it is your fingerprint and it is being stored unprotected or transmitted across the network in "cleartext"?
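The interview does not describe a specific challenge/response design, so the following is only a generic sketch of the idea, assuming the device and the server share a secret key and use HMAC-SHA256 from Python's standard library. The function names and the demo key are hypothetical. The point it illustrates is that a fresh random challenge makes each response unique, so a response captured off the wire cannot simply be replayed.

```python
import hashlib
import hmac
import secrets


def new_challenge():
    """Server side: generate a fresh, unpredictable challenge for each attempt."""
    return secrets.token_bytes(16)


def respond(shared_secret, challenge):
    """Device side: prove knowledge of the secret without sending it in cleartext."""
    return hmac.new(shared_secret, challenge, hashlib.sha256).digest()


def verify(shared_secret, challenge, response):
    """Server side: recompute the expected response and compare in constant time."""
    expected = hmac.new(shared_secret, challenge, hashlib.sha256).digest()
    return hmac.compare_digest(expected, response)


if __name__ == "__main__":
    secret = b"demo-key-for-illustration-only"  # assumed pre-shared key

    first = new_challenge()
    answer = respond(secret, first)
    print(verify(secret, first, answer))    # legitimate response to this challenge

    second = new_challenge()
    print(verify(secret, second, answer))   # replaying the old response against a new challenge
```

In this sketch the secret never crosses the network; only the challenge and the keyed digest do. How such a scheme would be bound to a biometric reading is a separate design question that the sketch does not attempt to answer.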

After years as a hacker and a software developer, and now as a leading consultant, do you think the belief in a "secure network" is really just an oxymoron?

Without an understanding of what is being secured, how the network needs to be accessed to conduct business, why it needs to be secured in the first place, and what the ramifications, remediation, and reconstitution efforts would need to be if it were to be compromised... then "secure network" is not just an oxymoron, it is a non-sequitur.

Do you ever foresee a time when being a hacker will be as antiquated as being a blacksmith? What would it take for that to happen, or is that scenario even remotely plausible?

Not for the definition I have always attached to the word "hacker". Eric Raymond's Jargon File describes a hack as follows (and I don't think either definition will, or should, ever become antiquated):

---

The Meaning of 'Hack'

"The word hack doesn't really have 69 different meanings," according to MIT hacker Phil Agre. "In fact, hack has only one meaning, an extremely subtle and profound one which defies articulation. Which connotation is implied by a given use of the word depends in similarly profound ways on the context. Similar remarks apply to a couple of other hacker words, most notably random."

Hacking might be characterized as 'an appropriate application of ingenuity'. Whether the result is a quick-and-dirty patchwork job or a carefully crafted work of art, you have to admire the cleverness that went into it.

An important secondary meaning of hack is 'a creative practical joke'. This kind of hack is easier to explain to non-hackers than the programming kind. Of course, some hacks have both natures.