The Move to Enable Proactive AI in Security Operations

Can technology enable predictive and preemptive security operations? This is not a new question, and it is an especially valid one now.

Q: I’ve been reading that artificial intelligence (AI) can help establish predictive and preemptive Security Operations, instead of only reacting to incidents. Is that true?

A: AI is already being used for predictive, preemptive and proactive electronic information security. The same types of AI technologies can be applied to physical security.

This column explains how other industries have already been using AI for predictive and preemptive operations of various kinds. The security industry is just getting started with that kind of AI, but the emerging AI companies can explain how their products support predictive and preemptive physical security operations.

Cognitive Security

The information security world has been using AI for several years. TechTarget’s Whatis.com website calls it Cognitive Security, defined as “the application of AI technologies patterned on human thought processes to detect threats and protect physical and digital systems.” Whatis.com explains, “Machine learning algorithms make it possible for cognitive systems to constantly mine data for significant information and acquire knowledge through advanced analytics. By continually refining methods and processes, the systems learn to anticipate threats and generate proactive solutions. The ability to process and analyze huge volumes of structured and unstructured data means that cognitive security systems can identify connections among data points and trends that would be impossible for a human to detect.” One of the long-time objectives of AI is to do things faster and better than a human.

Proactive AI-Based Operations

AI research and development have been going on since the 1950s, but the state of computing wasn’t good enough to achieve the kind of data processing that AI required. That’s why most AI applications have been narrow in their scope (such as text recognition) rather than broad and general purpose. What has enabled the AI accomplishments of recent years has been exponential advances in computer processing power.

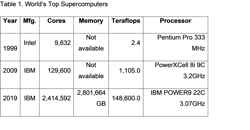

See the comparison chart in Table 1 of the computing power of the world’s top supercomputers today versus one and two decades ago. A supercomputer is a computer with a high level of performance compared to a general-purpose computer. The performance of supercomputers was originally stated in floating-point mathematical operations per second (FLOPS), but today’s supercomputer performance is stated in teraflops, where one teraflop is one trillion (10^12) FLOPS.

AI Requires Parallel Processing

AI needs parallel processing to, for example, run hundreds or thousands of what-if scenarios in real time, keeping track of what’s going on so that it can predict outcomes for the equipment or activity it is tracking. About five years ago, both Boeing and General Electric jet engine systems began sending real-time engine status and diagnostics information to their cloud databases, where AI applications analyze the data.

The companies notify their airline customers’ service departments of service required so that service technicians can be ready and waiting with the exact parts and tools needed to perform service the instant the plane lands. This approach reduces jet fuel consumption, extends the service life of the engines, and eliminates incidents of unplanned aircraft downtime. This is an example of predictive, preemptive and proactive maintenance operations.
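To make the what-if idea concrete, here is a minimal Python sketch that runs many independent scenarios through a worker pool and summarizes the outcomes as a probability. Everything in it is an assumption made for illustration: the `simulate_wear` model, the wear numbers, and the function names are hypothetical and do not represent how Boeing or General Electric analyze engine data.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def simulate_wear(seed, start_wear=0.60, flights=50):
    """Run one hypothetical what-if scenario.

    Returns True if a part whose wear starts at `start_wear` reaches
    the wear limit of 1.0 within `flights` flights.
    """
    rng = random.Random(seed)  # per-scenario generator keeps runs repeatable
    wear = start_wear
    for _ in range(flights):
        wear += rng.uniform(0.0, 0.02)  # made-up wear added per flight
    return wear >= 1.0


def failure_probability(n_scenarios=1000, workers=8):
    """Run the scenarios through a worker pool and return the failing fraction."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        outcomes = list(pool.map(simulate_wear, range(n_scenarios)))
    return sum(outcomes) / n_scenarios


probability = failure_probability()
print(f"Estimated chance the part needs service within 50 flights: {probability:.1%}")
```

The scenarios are independent of one another, which is what makes this kind of workload suitable for parallel hardware. In standard Python, threads do not speed up pure computation like this; `ProcessPoolExecutor` from the same module offers the same `map` interface and spreads the work across CPU cores.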

AI Anomaly Detection

A key factor in AI predictive and preemptive analysis is anomaly detection. An anomaly is something out of the ordinary – not normal. For example, AI software for jet engines learns what’s normal for an engine in all weather conditions, at various altitudes and geographies, as well as various speeds and during ascent and descent. It knows the normal ranges of the hundreds of jet engine data points in the various flying situations.
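The idea of learning normal ranges per situation can be illustrated with a small Python sketch. It is a deliberately simple statistical stand-in, not the method any engine maker uses: it assumes a single data point (a hypothetical exhaust temperature), two flying situations, and a "normal range" defined as the mean plus or minus three standard deviations. The function names and readings are invented for the example.

```python
from collections import defaultdict
from statistics import mean, stdev


def learn_normal_ranges(history, k=3.0):
    """Learn a (low, high) normal range for each flying situation.

    `history` is an iterable of (condition, value) pairs. The range is
    mean +/- k standard deviations, an assumed definition of "normal".
    """
    by_condition = defaultdict(list)
    for condition, value in history:
        by_condition[condition].append(value)

    ranges = {}
    for condition, values in by_condition.items():
        if len(values) < 2:
            continue  # too little data to estimate a spread
        centre, spread = mean(values), stdev(values)
        ranges[condition] = (centre - k * spread, centre + k * spread)
    return ranges


def is_anomaly(ranges, condition, value):
    """Return True if `value` falls outside the learned range for `condition`."""
    if condition not in ranges:
        return True  # a situation never seen before is itself out of the ordinary
    low, high = ranges[condition]
    return not (low <= value <= high)


# Hypothetical exhaust-temperature readings for two flying situations.
history = [("cruise", t) for t in (600, 605, 598, 602, 601)]
history += [("ascent", t) for t in (700, 710, 695, 705, 702)]
ranges = learn_normal_ranges(history)

print(is_anomaly(ranges, "ascent", 700))  # a reading of 700 during ascent
print(is_anomaly(ranges, "cruise", 700))  # the same reading during cruise
```

The same reading of 700 is normal during ascent but anomalous during cruise, which is why the software has to learn what’s normal separately for each situation rather than applying one fixed threshold.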

I know how valuable anomaly detection can be for physical security, as I experienced it while performing manual anomaly detection with the Briefcam video analytics software (www.briefcam.com) several years ago. I reviewed eight hours of video of stairwell activity at a community college in 15 minutes. In its video synopsis playback, Briefcam fit the people together as closely as possible as they moved down the stairwell.

Right away, one individual stuck out from the others because he had his hat tilted down and he kept his face turned away from the camera. His behavior stood out from that of others on the stairway. He had walked down the stairway alone, and in the normal video review, it wasn’t apparent at all that his behavior was anomalous. It only appeared that way in contrast to the others.

In one of the video frames he is clearly holding the stolen laptop computer, but a single frame does not stand out in normal video review. In recent years Briefcam has added advanced AI capabilities and now some of its anomaly detection is automatic.

Recently I talked to the folks at Vaion (www.vaion.com), one of the emerging physical security AI companies, to find out how an AI video analysis platform like theirs would learn “what’s normal” in an actual deployment. Tormod Ree, the CEO from Oslo, Norway, explained that their AI software applies all the selected video analysis capabilities to all of the cameras all the time. This is how it figures out what’s normal for all conditions of the facility, any time day or night, across all activity. It’s how the software achieves full facility situational awareness, meaning awareness of what’s going on that is normal and what is not.

AI Machine Learning Training

Ree further explained that what’s normal is established using two steps common to most AI applications. First is the deep learning training step, whereby the Vaion AI software examines several hundred to a million images, to understand what kinds of things to look for when deployed. This can take several days.

AI Deep Learning Inference

When the trained AI software is deployed, the next step is deep learning inference. An inference is a conclusion reached based on evidence and reasoning. I’ve skipped over a description of the deep learning aspects, which you can read about in an excellent blog article on the Nvidia website titled, “What’s the Difference Between Deep Learning Training and Inference?” (https://bit.ly/ai-inference).

Over a period of one week or several, the AI software examines all camera activity and learns what’s normal for the facility based on evidence, comparing what it’s been trained to see against what it is seeing. Over time it concludes what is normal and identifies things that don’t seem normal enough. These are anomalies, and they are brought to the attention of a human operator. The operator can confirm that each one is an anomaly or classify it as normal.
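The learn-then-review loop described above can be sketched in a few lines of Python. This is an assumption-laden illustration, not Vaion’s implementation: it replaces deep learning with a simple count of how often each kind of activity has been seen, and the class name, the `min_sightings` threshold, and the (camera, time of day, activity) event format are all hypothetical.

```python
from collections import Counter


class ActivityBaseline:
    """Toy baseline of what is normal, built from how often events are seen."""

    def __init__(self, min_sightings=3):
        self.min_sightings = min_sightings  # assumed threshold for "normal"
        self.counts = Counter()

    def learn(self, event):
        """Record an event observed during the initial learning period."""
        self.counts[event] += 1

    def is_anomaly(self, event):
        """An event seen fewer than `min_sightings` times is flagged for review."""
        return self.counts[event] < self.min_sightings

    def operator_review(self, event, confirmed_anomaly):
        """Apply the operator's decision on a flagged event.

        If the operator classifies the event as normal, it is added to the
        baseline so that it is not flagged again. A confirmed anomaly
        leaves the baseline unchanged.
        """
        if not confirmed_anomaly:
            self.counts[event] = max(self.counts[event], self.min_sightings)


baseline = ActivityBaseline()
for _ in range(5):
    baseline.learn(("lobby", "day", "person walking"))

night_event = ("lobby", "night", "person walking")
first_flag = baseline.is_anomaly(night_event)   # not seen before, so flagged
baseline.operator_review(night_event, confirmed_anomaly=False)
second_flag = baseline.is_anomaly(night_event)  # operator classified it as normal
print(first_flag, second_flag)
```

The design point is the feedback path: the operator’s classification changes what the system treats as normal, so the same harmless activity is not raised again.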

Current State of AI Applications for Security Operations

Right now, most emerging AI companies are engaging in pilot projects with prospective customers, increasingly fine-tuning their AI product performance in each deployment. Most provide product demonstrations, and the upcoming ASIS GSX conference in Chicago (www.gsx.org) is also a good way to see the AI platforms in action. Be sure to ask for which security operations risk scenarios their product can perform predictive, preemptive and proactive actions, and brainstorm a little about the kinds of scenarios that could work for you.

About the author: Ray Bernard, PSP CHS-III, is the principal consultant for Ray Bernard Consulting Services (RBCS), a firm that provides security consulting services for public and private facilities (www.go-rbcs.com). In 2018 IFSEC Global listed Ray as #12 in the world’s Top 30 Security Thought Leaders. He is the author of the Elsevier book Security Technology Convergence Insights available on Amazon. Mr. Bernard is a Subject Matter Expert Faculty of the Security Executive Council (SEC) and an active member of the ASIS International member councils for Physical Security and IT Security. Follow Ray on Twitter: @RayBernardRBCS.

Ray has recently released an insightful downloadable eBook titled, Future-Ready Network Design for Physical Security Systems, available in English and Spanish.

Follow him on LinkedIn: www.linkedin.com/in/raybernard.
