Surfaro on the Scene at CES 2023 - Part 2: Sensor Fusion’s Role in the Smart City

Over the past year, we have covered sensor fusion’s role in collecting visual data, helping us “see” better as humans. In sensor fusion, computer vision algorithms run on data from multiple sensors, including LEDs, thermal imagers, radar, LiDAR, 3D ToF (Time of Flight) cameras and more. The resulting combined, real-time visual intelligence improves our ability to react and investigate in many use-cases.
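One classic way to “combine” sensor data is inverse-variance weighting. Each sensor’s estimate of the same quantity (here, the distance to an object) counts in proportion to how precise that sensor is. The following is a minimal sketch. The sensor names, readings and noise variances are illustrative assumptions, not figures from any vendor. Production systems typically use richer methods such as Kalman filters.

```python
from dataclasses import dataclass


@dataclass
class Measurement:
    sensor: str
    value: float     # estimated distance to the object, in meters
    variance: float  # assumed sensor noise variance, in m^2


def fuse(measurements):
    """Fuse independent estimates of one quantity by inverse-variance weighting.

    Returns (fused_value, fused_variance). More precise sensors (smaller
    variance) get proportionally more weight, and the fused variance is
    never larger than that of the best single sensor.
    """
    if not measurements:
        raise ValueError("need at least one measurement")
    weights = [1.0 / m.variance for m in measurements]
    total = sum(weights)
    value = sum(w * m.value for w, m in zip(weights, measurements)) / total
    return value, 1.0 / total


# Hypothetical readings of the same pedestrian from three sensors
readings = [
    Measurement("thermal", 25.0, 4.0),
    Measurement("lidar", 24.0, 0.25),
    Measurement("radar", 24.5, 1.0),
]
distance, variance = fuse(readings)
print(f"fused distance: {distance:.2f} m, variance: {variance:.3f} m^2")
```

The fused estimate leans toward the LiDAR reading because that sensor was assumed to be the least noisy. Its variance ends up lower than any single sensor’s, which is the practical payoff of fusion.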

For example, Tesla Vision currently relies on visible-light cameras and machine learning. Will it be augmented by LiDAR, radar or even HD thermal imaging for sensor fusion? Although Tesla’s plans had not been finalized by CES 2023, whatever the company decides will encourage other industries to take advantage of sensor fusion’s economies of scale, while investors watch what Elon Musk plans to do with his other initiatives.

At CES 2023, exhibitors focused on smart cities and smart buildings showcased the safety benefits of sensor fusion. Here’s a closer look:

Owl AI: 3D Thermal Imaging Brings Scenes to Life

The 3D HD imaging and precision ranging of Owl AI’s high-resolution thermal imager remind me of Neo’s power to see people as three-dimensional digital constructs that “pop out” of the Matrix-scape. Even the company logo fuses Owl’s artificial intelligence with autonomous imaging.

“The most determinant variable in the autonomous market will always be safety,” Owl AI CEO Chuck Gershman told me. “Manufacturers will continuously be tasked with determining which technology solutions most effectively mitigate liability risks and cost for safe operation.”

Owl AI’s 3D Thermal Ranger is a 3D monocular thermal imaging solution that provides HD imaging of “life in motion”: pedestrians, people, pets, bicyclists, and the entire V2X vehicle ecosystem. It offers a 150x improvement in resolution and point-cloud density over current LiDAR sensors.

Day/night, all-weather object classification, together with calculating each object’s position, direction and speed, is what unlocks safe autonomous and semi-autonomous operation. Owl AI’s panoramic thermal imaging solution produces dense range maps superior to current LiDAR or radar technology at a similar integrated cost, and it is immune to vehicle vibration. This technology will enhance and revolutionize sensor fusion in city overwatch, perimeter protection, campus safety and border protection.

Watch or picture a traffic intersection and imagine the spatial neural network required to track cars, bicycles, people with disabilities and runners. This 3D scene of living and mechanical objects in motion brings to mind a FlightAware-style display of aircraft vectors pointing in different directions and moving at changing velocities and accelerations. This is why Owl AI pairs its imaging chipset with a powerful processor such as the NVIDIA Jetson.
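Tracking many objects at an intersection starts with two steps. Each new detection is associated with an existing track, and speed and heading are derived from the change in position between frames. The following is a minimal sketch using greedy nearest-neighbor association on ground-plane coordinates. The function name, the `max_jump` gate and the coordinate convention are assumptions for illustration, and it is not Owl AI’s algorithm.

```python
import math


def update_tracks(prev_tracks, detections, dt, max_jump=3.0):
    """Greedy nearest-neighbor association between two frames.

    prev_tracks: dict of track_id -> (x, y) from the previous frame, in meters
    detections:  list of (x, y) positions in the current frame, in meters
    dt:          time between the frames, in seconds
    max_jump:    largest plausible movement between frames (assumed gate), meters
    Returns dict of track_id -> {"pos", "speed" (m/s), "heading_deg"}.
    """
    # Every (track, detection) pair, closest first
    pairs = sorted(
        (math.dist(p, d), tid, j)
        for tid, p in prev_tracks.items()
        for j, d in enumerate(detections)
    )
    used_tracks, used_dets, out = set(), set(), {}
    for dist, tid, j in pairs:
        if dist > max_jump or tid in used_tracks or j in used_dets:
            continue
        used_tracks.add(tid)
        used_dets.add(j)
        (x0, y0), (x1, y1) = prev_tracks[tid], detections[j]
        out[tid] = {
            "pos": (x1, y1),
            "speed": dist / dt,
            # Heading measured counterclockwise from the +x axis, 0-360 degrees
            "heading_deg": math.degrees(math.atan2(y1 - y0, x1 - x0)) % 360,
        }
    # Detections with no nearby track start new tracks (no velocity yet)
    next_id = max(prev_tracks, default=-1) + 1
    for j, d in enumerate(detections):
        if j not in used_dets:
            out[next_id] = {"pos": d, "speed": None, "heading_deg": None}
            next_id += 1
    return out


prev = {0: (0.0, 0.0), 1: (10.0, 0.0)}
detections = [(10.0, 1.5), (1.0, 0.0), (50.0, 50.0)]
tracks = update_tracks(prev, detections, dt=0.5)
for tid, info in sorted(tracks.items()):
    print(tid, info)
```

In this sketch, tracks with no matching detection are simply dropped. Real trackers add motion prediction (for example, Kalman filtering) and keep tracks alive through brief occlusions.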

Back in 2017, I worked with Jack Hanagriff, then Critical Infrastructure Protection Coordinator for the Houston Urban Area Security Initiative, to deploy standard-resolution thermal IP cameras as “overwatch” alongside visible-light PTZ cameras at a Super Bowl-related event in the city. This helped first responders locate medical emergencies in the crowd at the co-located concert. An imaging device such as the 3D Thermal Ranger would have vastly improved operational response. www.owlai.us

AEye: LiDAR and AI for Perimeter Security

The availability of a wide range of sensor fusion choices will dramatically improve the safety and experience for pedestrians, bicyclists, runners, delivery personnel, construction workers and, of course, drivers in smart cities.

AEye’s CES exhibit demonstrated a crosswalk, a microcosm of a city packed with visual intelligence that creates a path to V2X communications. As a total solution provider, AEye provides computer vision, AI software and software-defined LiDAR. In a recent test at the… At the exhibit, I met with Matt Bretoi, AEye’s Senior Director of Smart Cities, who described how the company’s unique software works directly with its LiDAR sensors to provide rich data on a wide range of scenes.

“The LiDAR in this demonstration is looking out to this [exhibit] crosswalk,” he explained. “What kind of data do we want? How many people will pass by? Here, we cumulatively totaled 1,981 people coming through the crosswalk area, and they averaged 1.23 m apart from each other. We can measure a subject’s height for classification. That is the kind of precision that LiDAR provides.”
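Counts and spacing figures like the ones Bretoi cites can be derived from tracked LiDAR positions. The following minimal sketch makes two assumptions: an upstream tracker already assigns a stable ID to each person, and positions are projected onto the ground plane and filtered to the crosswalk zone. The function names are hypothetical, and this is not AEye’s software. The sketch counts unique IDs, averages each person’s distance to their nearest neighbor, and estimates height from the highest return above the ground.

```python
import math


def crosswalk_stats(frames):
    """Count unique people and their average nearest-neighbor spacing.

    frames: list of frames; each frame is a dict of track_id -> (x, y) ground
            position in meters, already filtered to the crosswalk zone.
    Returns (unique_people, mean_spacing_m); mean_spacing_m is None if no
    frame ever contained two or more people.
    """
    seen = set()
    spacings = []
    for frame in frames:
        seen.update(frame)  # adds the track IDs present in this frame
        pts = list(frame.values())
        if len(pts) < 2:
            continue
        for i, p in enumerate(pts):
            # Distance from this person to the closest other person
            spacings.append(min(math.dist(p, q) for j, q in enumerate(pts) if j != i))
    mean_spacing = sum(spacings) / len(spacings) if spacings else None
    return len(seen), mean_spacing


def height_from_points(points, ground_z=0.0):
    """Estimate a person's height as the highest LiDAR return above the ground."""
    return max(z for _, _, z in points) - ground_z


frames = [
    {1: (0.0, 0.0), 2: (1.2, 0.0)},
    {1: (0.5, 0.0), 2: (1.7, 0.0), 3: (5.0, 0.0)},
]
count, spacing = crosswalk_stats(frames)
print(count, round(spacing, 2))
print(height_from_points([(0.0, 0.0, 0.1), (0.0, 0.0, 1.78), (0.1, 0.0, 1.2)]))
```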

In the security industry, LiDAR’s deployment represents a great opportunity to improve detection, at a far lower cost than perimeter cameras. “In perimeter security, you are trying to decide if an object is an intruder from a 2D image,” Bretoi said. “With LiDAR, from the 3D perspective, we can look at every object with great precision, object orientation and actual distance.”
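Every LiDAR return carries a 3D position, so distance and orientation can be computed directly rather than inferred from a 2D image. The following minimal sketch assumes a z-up sensor frame and a cluster of points already segmented as one object. The range is the distance to the cluster centroid. The orientation is the principal axis of the object’s ground footprint, found with a 2D principal component analysis. The function name and conventions are illustrative assumptions.

```python
import math


def range_and_orientation(points):
    """Distance, bearing and footprint orientation of one segmented object.

    points: list of (x, y, z) LiDAR returns in the sensor frame (meters, z up).
    Returns (range_m, bearing_deg, yaw_deg):
      range_m:     straight-line distance from the sensor to the cluster centroid
      bearing_deg: direction of the centroid, counterclockwise from the +x axis
      yaw_deg:     angle of the footprint's long axis, in [0, 180)
    """
    n = len(points)
    cx = sum(p[0] for p in points) / n
    cy = sum(p[1] for p in points) / n
    cz = sum(p[2] for p in points) / n
    range_m = math.sqrt(cx * cx + cy * cy + cz * cz)
    bearing_deg = math.degrees(math.atan2(cy, cx))
    # 2x2 covariance of the ground-plane footprint
    sxx = sum((p[0] - cx) ** 2 for p in points) / n
    syy = sum((p[1] - cy) ** 2 for p in points) / n
    sxy = sum((p[0] - cx) * (p[1] - cy) for p in points) / n
    # Angle of the covariance matrix's dominant eigenvector (principal axis)
    yaw_deg = (0.5 * math.degrees(math.atan2(2 * sxy, sxx - syy))) % 180
    return range_m, bearing_deg, yaw_deg


r, b, y = range_and_orientation([(10.0, 10.0, 0.5), (11.0, 11.0, 0.5), (12.0, 12.0, 0.5)])
print(f"{r:.2f} m at bearing {b:.1f} deg, long axis {y:.1f} deg")
```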

AEye’s LiDAR is adaptive. Bretoi said it can be optimized for a specific use-case, such as monitoring pedestrians at an intersection, an event or an airport, or looking down a perimeter security fence line. www.aeye.ai

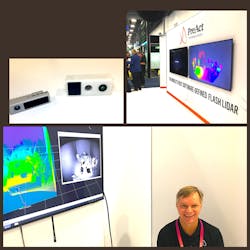

PreAct Technologies: Sensors Small Enough to be Placed Anywhere

When I met with PreAct CEO Paul Drysch, he looked like a kid in a candy store as he showed me his line of advanced, compact, even miniature LiDAR and ToF sensor units that can be placed anywhere to detect virtually anything. Most of them can be used in security and public safety; their autonomous vehicle counterparts are custom-fitted into vehicles, so the housing is the only difference.

“We’re the world’s first software-defined LiDAR; we can vary the waveform algorithm and many features over time,” said Drysch, who was showing me the PreAct T30P Flash LiDAR. “For example, the automatic door actuation feature uses a LiDAR unit that recognizes, verifies, opens the door, and looks for objects or obstacles to avoid.” www.preact-tech.com
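The door-actuation sequence Drysch describes (recognize, verify, open, watch for obstacles) maps naturally onto a small state machine. Here is a hypothetical sketch. The states, inputs and rules are assumptions for illustration and do not represent PreAct’s firmware or the T30P’s interface.

```python
from enum import Enum, auto


class Door(Enum):
    CLOSED = auto()
    OPENING = auto()
    OPEN = auto()
    CLOSING = auto()


def next_state(state, person_approaching, verified, obstacle_in_path):
    """Advance a hypothetical LiDAR-driven door controller by one step.

    person_approaching: a person-classified object is moving toward the door
    verified:           the person passed whatever check the site requires
    obstacle_in_path:   any object is inside the door's motion zone
    """
    if state is Door.CLOSED:
        # Recognize and verify before opening
        return Door.OPENING if person_approaching and verified else Door.CLOSED
    if state is Door.OPENING:
        return Door.OPEN
    if state is Door.OPEN:
        # Hold open while someone is approaching or something is in the way
        return Door.OPEN if person_approaching or obstacle_in_path else Door.CLOSING
    if state is Door.CLOSING:
        # Reverse if an obstacle appears while closing
        return Door.OPENING if obstacle_in_path else Door.CLOSED
    raise ValueError(f"unknown state: {state}")


state = Door.CLOSED
for inputs in [(True, True, False), (True, True, False), (False, False, True),
               (False, False, False), (False, False, True)]:
    state = next_state(state, *inputs)
    print(state.name)
```

Keeping obstacle checks in both the OPEN and CLOSING states reflects the “looks for objects or obstacles to avoid” step. The door never closes on something the sensor can see.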

AEVA: Adding a Fourth Dimension to LiDAR

On the moon, there is no GPS, so when LiDAR specialist AEVA Inc. began working with NASA on a lightweight method of mapping landing areas, another capability was uncovered: the ability to detect clouds of dust. At the Potrillo volcanic field testing site in New Mexico and other desert locations across the Southwest, AEVA’s Frequency Modulated Continuous Wave (FMCW) LiDAR can detect the forward propagation and velocity of a “haboob,” an enormous wall of dust moving at up to 37 mph.

One year later, at CES 2023, AEVA introduced the next-generation Aeries II FMCW LiDAR sensor with built-in 4D Perception Software, which detects instant velocity and position and delivers up to a camera-grade 1,000 lines per frame with “ultra resolution.”

At its booth, AEVA’s Michael Oldenberger explained how Aeries II can detect cars at 500 meters and pedestrians at 350 meters. “LiDAR produces precise info about where in 3D space objects around the sensor are,” he said. “What’s unique about AEVA is that we bring the fourth dimension into the equation, the velocity layer. Traditional LiDAR must infer velocity, looking across multiple frames, detecting position changes to calculate velocity. We detect it directly.”

Instead of Time of Flight (ToF), Aeries II uses a low-power continuous beam whose frequency is modulated over time, and it measures the frequency shift of the returning light, including the Doppler shift caused by a moving object. www.aeva.com
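The FMCW principle can be illustrated with the common triangular-sweep textbook model. On the rising ramp, the measured beat frequency is the range term minus the Doppler term. On the falling ramp, it is the range term plus the Doppler term. Adding and subtracting the two therefore recovers range and radial velocity from a single measurement, without comparing frames. The following sketch is not AEVA’s signal processing. The 1550 nm wavelength and chirp slope are assumed values, and the model assumes the range term exceeds the Doppler term so both beat frequencies stay positive.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def simulate_beats(range_m, velocity_mps, chirp_slope, wavelength):
    """Forward model: beat frequencies (Hz) on the up- and down-ramps for a
    target at range_m moving toward the sensor at velocity_mps."""
    f_range = 2.0 * range_m * chirp_slope / C  # slope x round-trip delay
    f_doppler = 2.0 * velocity_mps / wavelength
    return f_range - f_doppler, f_range + f_doppler


def fmcw_range_velocity(f_beat_up, f_beat_down, chirp_slope, wavelength):
    """Invert the forward model: recover range (m) and radial velocity (m/s,
    positive = approaching) from the two measured beat frequencies."""
    f_range = (f_beat_up + f_beat_down) / 2.0
    f_doppler = (f_beat_down - f_beat_up) / 2.0
    return C * f_range / (2.0 * chirp_slope), wavelength * f_doppler / 2.0


# Assumed parameters: 1 GHz sweep over 10 microseconds, 1550 nm laser
SLOPE = 1e9 / 10e-6      # Hz per second
WAVELENGTH = 1550e-9     # meters

# A dust front 100 m away closing at 16.5 m/s (roughly 37 mph)
up, down = simulate_beats(100.0, 16.5, SLOPE, WAVELENGTH)
rng, vel = fmcw_range_velocity(up, down, SLOPE, WAVELENGTH)
print(f"beats: {up/1e6:.1f} / {down/1e6:.1f} MHz -> {rng:.1f} m, {vel:.1f} m/s")
```

This is the “fourth dimension” Oldenberger refers to. Velocity falls out of the same return as range, instead of being inferred from position changes across frames.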

NoTraffic: Intelligent Traffic System Solutions

City managers don’t just want vehicles to avoid pedestrians and cyclists; they are demanding transportation policies that keep vehicles moving through once-congested business districts, even when a sinkhole and a construction crew threaten to spoil a great day.

Israeli startup NoTraffic is on a mission to digitize urban intersections with a real-time, plug-and-play autonomous traffic management platform that uses AI and cloud computing to reinvent how cities run their transport networks.

“The discipline of moving traffic has actually been the same for the last hundred years,” explained NoTraffic VP of Sales Thomas Cooper. “Now, intelligence will have the infrastructure react in real time to demand, in accordance with policies we set; for example, traffic flows smoothly…”

Legacy systems count cars and pick fixed traffic signal timing plans. “With NoTraffic’s sensors and platform, the timing, lane functions and speed limit all automatically adjust to avoid congestion,” Cooper said.
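Cooper contrasts fixed timing plans with demand-driven timing. That contrast can be sketched as a simple proportional split: each phase gets a guaranteed minimum green, and the remaining green time in the cycle is shared in proportion to the demand the sensors currently detect. This is a deliberately simplified, hypothetical illustration, not NoTraffic’s optimization. The function name and parameter values are assumptions. Real controllers also handle pedestrian intervals, corridor coordination and safety constraints.

```python
def allocate_green(cycle_s, lost_time_s, demand, min_green_s=7.0):
    """Split one signal cycle's usable green time across phases by demand.

    cycle_s:     total cycle length, seconds
    lost_time_s: time lost to yellow/all-red clearance, seconds
    demand:      dict of phase name -> detected demand (e.g., queued vehicles)
    min_green_s: guaranteed green per phase, seconds (assumed value)
    Returns dict of phase name -> green time in seconds.
    """
    if not demand:
        raise ValueError("need at least one phase")
    usable = cycle_s - lost_time_s
    n = len(demand)
    if usable < n * min_green_s:
        raise ValueError("cycle too short to give every phase its minimum green")
    extra = usable - n * min_green_s
    total = sum(demand.values())
    if total == 0:
        # No detected demand: share the extra time evenly
        return {phase: min_green_s + extra / n for phase in demand}
    return {phase: min_green_s + extra * d / total for phase, d in demand.items()}


# A fixed plan might split 40/40; detected demand shifts time to the busier phase
print(allocate_green(90.0, 10.0, {"north-south": 30, "east-west": 10}))
```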

The NoTraffic sensor looks like a camera, but it is really a sensor fusion, multi-spectral, multi-core processing unit that uses algorithms to move traffic more effectively while still managing the safety of pedestrians, cyclists and runners.

This is not a new concept; in fact, companies like RightCrowd have been positively impacting corporate “citizens” through access control policies delivered by flexible technology.

Perhaps the most incredible life-saving use of this “hive mind” sharing information between many intersections is the Red-Light Runner Accident Prevention Policy. “A driver approaching the intersection with a soon-expiring green light is determined to want to run the red light,” Cooper explained. “The system sends a warning to the stopped vehicle to ‘stand by: red light runner vehicle detected.’ After the threat passes, signals in the surrounding area return to normal policy.”
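A red-light-runner prediction of the kind Cooper describes can be approximated with basic kinematics. Suppose a vehicle, at its current speed, will reach the stop line after the signal turns red. If it is also braking with less than the deceleration v²/(2d) needed to stop within distance d, it is likely to run the light. The following is a hypothetical illustration. The function name, the constant-speed arrival check and the 0.9 braking margin are assumptions, not NoTraffic’s policy logic.

```python
def predict_red_light_runner(distance_m, speed_mps, decel_mps2, time_to_red_s,
                             margin=0.9):
    """Flag a vehicle likely to enter the intersection on red.

    distance_m:    distance from the vehicle to the stop line, meters
    speed_mps:     current speed, m/s
    decel_mps2:    currently observed deceleration, m/s^2 (positive = braking)
    time_to_red_s: remaining green + yellow time, seconds
    margin:        fraction of the required deceleration that counts as
                   "braking to stop" (assumed tuning value)
    """
    if distance_m <= 0:
        return False  # already past the stop line
    # Simplification: assume constant speed when checking arrival time
    if speed_mps * time_to_red_s >= distance_m:
        return False  # reaches the stop line before the light turns red
    required_decel = speed_mps ** 2 / (2.0 * distance_m)  # v^2 / (2d)
    return decel_mps2 < margin * required_decel


# 40 m out at 20 m/s (about 45 mph) with 1 s until red
print(predict_red_light_runner(40.0, 20.0, 0.0, 1.0))  # not braking
print(predict_red_light_runner(40.0, 20.0, 5.0, 1.0))  # braking at 5 m/s^2
```

In the scenario Cooper describes, a positive result is what would trigger the warning to the stopped cross-traffic vehicle. How that warning is delivered is outside this sketch.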

As I wrote in the August 2022 Security Business magazine cover story, intelligent transportation is one of the less-traveled paths that can enable an integrator to break into the smart city market. Policy creation using technology like NoTraffic’s is an important enabler of that goal. https://notraffic.tech

Steve Surfaro is Chairman of the Public Safety Working Group for the Security Industry Association (SIA) and has more than 30 years of security industry experience. He is a subject matter expert in smart cities and buildings, cybersecurity, forensic video, data science, command center design and first responder technologies. Follow him on Twitter, @stevesurf.
